Personal Project

Instrument Data Pipeline

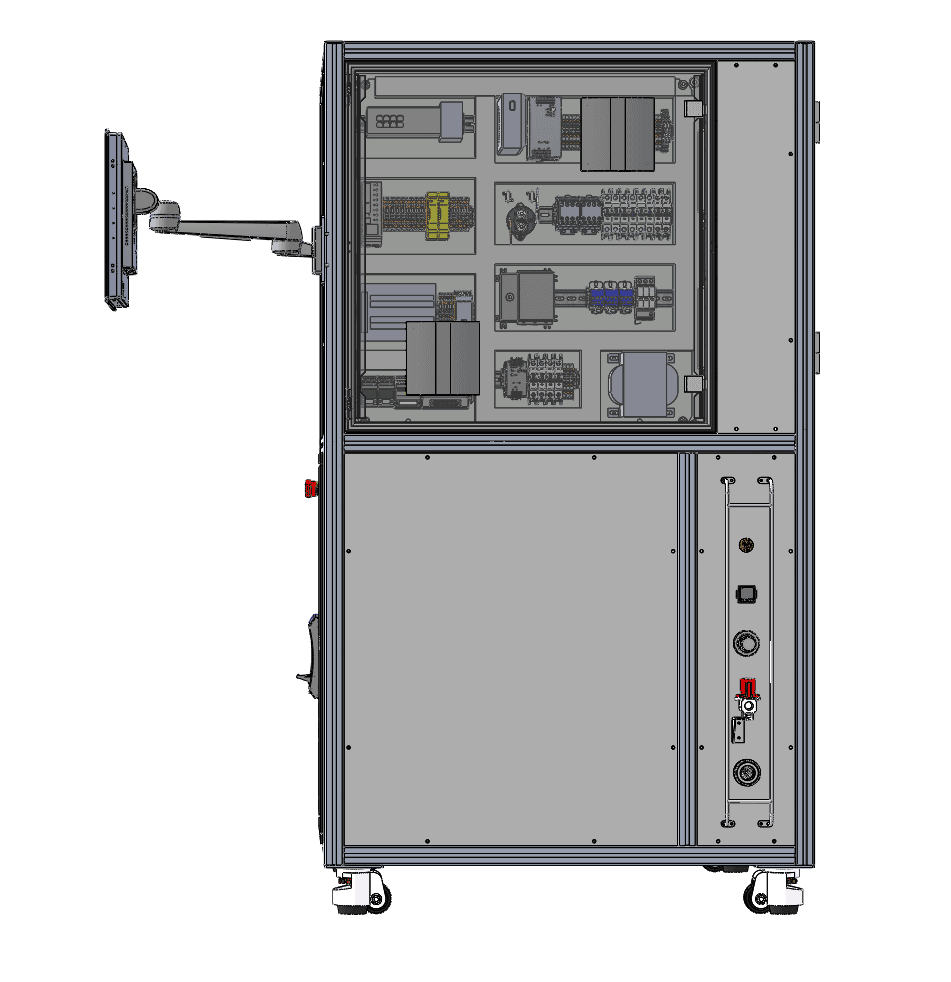

Production data pipeline that ingests and normalizes electrical and environmental test data across five hardware testing stages: burn-in, HiPot, in-circuit test, isolation resistance, and laser profilometry. Built automated ETL pipelines with statistical process control (SPC) charting, capability analysis (Cp/Cpk), and quality report generation that replaced manual R&D data review.

Designed the ETL architecture to handle heterogeneous test data from five distinct hardware testing stages, each with its own file formats, measurement units, and sampling rates. The stages are Burn-In (continuous temperature/current monitoring over 24-72 hour cycles), HiPot (high-voltage insulation testing with pass/fail thresholds), In-Circuit Test (component-level electrical verification), Isolation Resistance (megohm-level measurements with temperature compensation), and Laser Profilometry (surface topology measurements with micron-level precision).
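As a rough illustration of the unit-normalization step, the sketch below converts stage-specific readings to a base unit and wraps them in one record type. The `Measurement` fields, the `normalize` function, and the unit table are hypothetical names chosen for this example; the actual schema is not described above.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical conversion table: raw unit -> (base unit, multiplier).
# Keys are case-sensitive so that "mA" and "Mohm" stay distinct.
_TO_BASE = {
    "ohm": ("ohm", 1.0),
    "kohm": ("ohm", 1e3),
    "Mohm": ("ohm", 1e6),
    "Gohm": ("ohm", 1e9),
    "V": ("V", 1.0),
    "kV": ("V", 1e3),
    "A": ("A", 1.0),
    "mA": ("A", 1e-3),
    "m": ("m", 1.0),
    "um": ("m", 1e-6),
}


@dataclass(frozen=True)
class Measurement:
    """One normalized reading (assumed field set, for illustration only)."""
    stage: str
    serial: str
    parameter: str
    value: float
    unit: str
    timestamp: datetime


def normalize(stage, serial, parameter, raw_value, raw_unit, timestamp):
    """Convert a raw reading to its base unit and return a Measurement."""
    try:
        base_unit, factor = _TO_BASE[raw_unit]
    except KeyError:
        raise ValueError(f"unknown unit {raw_unit!r} for stage {stage!r}") from None
    return Measurement(stage, serial, parameter,
                       float(raw_value) * factor, base_unit, timestamp)


reading = normalize("isolation_resistance", "SN001", "r_iso", "250", "Mohm",
                    datetime(2024, 1, 1, tzinfo=timezone.utc))
```

Rejecting unknown units outright, rather than passing them through, keeps a mislabeled column from silently skewing downstream statistics.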

Built SPC (Statistical Process Control) charting with X-bar/R charts, control limit calculation (UCL/LCL at ±3σ around the center line), and Western Electric run rules for detecting process drift before it produces out-of-spec parts. Implemented capability analysis computing Cp and Cpk indices against engineering specifications: Cp compares the specification width to the process spread, while Cpk also penalizes an off-center process and so gives a single number indicating whether the process is capable of meeting tolerances. Automated quality report generation with pass/fail summaries, trend visualization, and flagged anomalies replaced hours of manual R&D data review per test run.
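The calculations named above can be sketched in a few functions. This is a minimal standard-library version, not the pipeline's pandas code; the function names are hypothetical. It assumes subgroup sizes of 2 to 5 and uses the standard Shewhart constants (A2, D3, D4). Note that `cp_cpk` estimates sigma from the overall sample standard deviation, whereas some conventions use the within-subgroup estimate R-bar/d2.

```python
from statistics import mean, stdev

# Standard Shewhart chart constants by subgroup size: (A2, D3, D4).
_XBAR_R_CONSTANTS = {
    2: (1.880, 0.0, 3.267),
    3: (1.023, 0.0, 2.574),
    4: (0.729, 0.0, 2.282),
    5: (0.577, 0.0, 2.114),
}


def xbar_r_limits(subgroups):
    """Return (LCL, center, UCL) for the X-bar chart and the R chart."""
    n = len(subgroups[0])
    if any(len(g) != n for g in subgroups):
        raise ValueError("all subgroups must have the same size")
    if n not in _XBAR_R_CONSTANTS:
        raise ValueError(f"no chart constants for subgroup size {n}")
    a2, d3, d4 = _XBAR_R_CONSTANTS[n]
    xbars = [mean(g) for g in subgroups]
    ranges = [max(g) - min(g) for g in subgroups]
    xbarbar, rbar = mean(xbars), mean(ranges)
    return {
        "xbar": (xbarbar - a2 * rbar, xbarbar, xbarbar + a2 * rbar),
        "range": (d3 * rbar, rbar, d4 * rbar),
    }


def cp_cpk(values, lsl, usl):
    """Cp = (USL - LSL) / 6s; Cpk = min(USL - mean, mean - LSL) / 3s."""
    mu, sigma = mean(values), stdev(values)
    cp = (usl - lsl) / (6 * sigma)
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)
    return cp, cpk


def run_of_eight(points, center):
    """Western Electric rule 4: indices where a run of eight or more
    consecutive points on one side of the center line is in progress."""
    flagged, run, last_side = [], 0, 0
    for i, p in enumerate(points):
        side = (p > center) - (p < center)  # +1 above, -1 below, 0 on the line
        if side != 0 and side == last_side:
            run += 1
        else:
            run = 1 if side != 0 else 0
        last_side = side
        if run >= 8:
            flagged.append(i)
    return flagged


limits = xbar_r_limits([[10, 12], [11, 13], [9, 11]])
cp, cpk = cp_cpk([9, 10, 11], lsl=7, usl=16)
```

In the last call the process mean sits closer to the lower limit, so Cpk comes out lower than Cp. That gap is the signal that a process is off-center rather than too variable.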

The pipeline is written in Python, with pandas for data ingestion and transformation and matplotlib for SPC chart rendering. The architecture is modular: each testing stage has its own parser module, and all stages share common SPC and reporting infrastructure. Designed for extensibility, so adding a new test stage requires only writing a parser that outputs the standardized intermediate format.
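One way such a per-stage parser layout might look is a small registry keyed by stage name. Everything here is an assumption made for illustration: the `register` decorator, the `PARSERS` table, the dictionary-based record, and the HiPot CSV columns (`serial`, `leakage_ma`, `result`) are not taken from the real code.

```python
import csv
import io
from typing import Callable, Dict, Iterable, Iterator

Record = dict  # assumed intermediate format: stage, serial, parameter, value, unit
Parser = Callable[[Iterable[str]], Iterator[Record]]

PARSERS: Dict[str, Parser] = {}


def register(stage: str):
    """Decorator that adds a parser function to the registry under `stage`."""
    def decorator(func: Parser) -> Parser:
        PARSERS[stage] = func
        return func
    return decorator


@register("hipot")
def parse_hipot(lines):
    # Hypothetical CSV layout: serial,leakage_ma,result
    for row in csv.DictReader(lines):
        yield {
            "stage": "hipot",
            "serial": row["serial"],
            "parameter": "leakage_current",
            "value": float(row["leakage_ma"]) * 1e-3,  # mA -> A
            "unit": "A",
        }


def ingest(stage: str, lines: Iterable[str]):
    """Look up the parser for `stage` and return its records as a list."""
    try:
        parser = PARSERS[stage]
    except KeyError:
        raise ValueError(f"no parser registered for stage {stage!r}") from None
    return list(parser(lines))


records = ingest("hipot", io.StringIO("serial,leakage_ma,result\nSN1,0.5,PASS\n"))
```

With this shape, supporting a new stage means adding one decorated function. The shared SPC and reporting code only ever sees the common record format.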